Why Hybrid Intelligence is Vital in a Data-Driven Era

Since ChatGPT launched at the end of 2022, AI, or artificial intelligence, has become a buzzword applied to almost anything imaginable. Although a chatbot like ChatGPT is definitely ‘Artificial’, is it actually ‘Intelligent’? Over the past year and a half or so, we have all seen, or at least heard about, how confidently wrong a Large Language Model (LLM) like ChatGPT can be. Confidently making the same mistake over and over again seems like the opposite of being intelligent.

Artificial intelligence, artificial general intelligence, artificial superintelligence, the AI singularity: these terms all invoke ‘intelligence’, but how intelligent are the systems behind them really? And for that matter, what exactly is intelligence?

What is intelligence in the first place?

The Oxford definition of ‘intelligence’ is “the ability to acquire and apply knowledge and skills”. By this definition, could we not call all computers intelligent? When a computer is programmed, from the perspective of the computer, it is acquiring knowledge and skills: knowledge in the form of data, and skills in the form of instructions on how and when to manipulate that data in a certain way.

Another way to look at AI is as systems that mimic human cognitive abilities. Seen from this perspective, it seems that we must add one important aspect: learning. The difference between learning and simply ‘acquiring knowledge’ is that learning requires awareness.

It is this awareness that allows us to adapt and avoid repeating unwanted outcomes, such as being confidently wrong. We humans can learn from our mistakes; a computer and its software, in their current form, cannot. The system is trained once and its knowledge remains the same until it is updated; it does not continuously update, or learn, by itself.

So intelligence in AI is best described as the imitation of human intelligence. To what extent an AI is smart, or only seems to be so, depends on the type of AI we’re discussing.

The types of AI

As AI is so poorly defined, let’s have a look at the different shapes and sizes in which it appears. To be clear, this is by no means a comprehensive overview, but meant to clarify how certain behaviour of certain systems can easily be mistaken for intelligence.

Reactive Machines (RM)

Take IBM’s Deep Blue, the chess computer that in 1997 became the first to beat a reigning world champion in a match. A system that can beat Kasparov, a Grandmaster since 1980, surely must be intelligent?

Chess is notoriously difficult and takes years to master, so beating a Grandmaster is impressive. Explaining in one sentence how a machine like Deep Blue works sells its developers short, but it illustrates how being impressive is not the same as being intelligent.

Deep Blue is what we would call a ‘reactive machine’. It will automatically react to a limited set of inputs according to rules it was ‘taught’. If A then B, if C then D etc., continued billions upon billions of times. It does not ‘think’ about its next move; it mechanically works through fixed rules and calculations to find it. Impressive if you realise how many possible moves there are in chess, but there’s little intelligence at work here.
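The ‘if A then B’ idea can be sketched in a few lines. This is a deliberately tiny toy and not how Deep Blue was actually built: the rule table and the `react` function are made up for illustration. The point is only that the same input always produces the same output, and nothing is remembered or learned between calls.

```python
# Hypothetical rule table: every known input maps to one fixed response.
RULES = {
    "e4": "e5",
    "d4": "d5",
    "Nf3": "Nf6",
}


def react(opponent_move: str) -> str:
    """Return the pre-programmed reply; no state is kept between calls."""
    return RULES.get(opponent_move, "no rule for this input")


print(react("e4"))  # the fixed reply for this input
print(react("e4"))  # exactly the same reply again, nothing was learned
print(react("h4"))  # an input outside the rules leaves the machine stuck
```

However many rules are added, the machine never goes beyond what was put into it.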

To put this performance into perspective, the computational power of this multi-million euro computer is less than that of an average smartphone, not even 20 years later.

Limited Memory Machines (LMM)

Talking about phones, T9 predictive text input is another example of a very basic form of AI. For the younger readers who never had a dumbphone, T9 stands for ‘Text on 9 keys’, and it was the way we used to type messages on the numeric keypad of phones. It is the predecessor of the many smart keyboards, such as Swype or SwiftKey, that can be found on all modern smartphones.

What distinguishes this kind of system is that it is enhanced with some memory and very basic learning capabilities. Although it lacks any form of understanding of language or the context in which it is used, it uses user data to improve its suggestions and it will get better over time as it ‘learns’ more about a specific task.

The way that such a system adapts to you might again seem intelligent, but on the inside it is nothing more than a form of statistics. Basically, it simply suggests the word that you have used most often before. If you text someone ‘good morning’ every morning, it will not suggest ‘good evening’ when it actually is evening.
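A minimal sketch of this kind of frequency-based suggestion, assuming a hypothetical `NextWordSuggester` class that only counts which word followed which in earlier messages. Real predictive keyboards are more elaborate, but the principle is the same: the most frequent follower wins, and the time of day plays no role.

```python
from collections import Counter, defaultdict


class NextWordSuggester:
    """Toy suggester: remembers how often each word followed another."""

    def __init__(self):
        # Maps a word to a Counter of the words that came directly after it.
        self.followers = defaultdict(Counter)

    def learn(self, message: str) -> None:
        words = message.lower().split()
        for current, following in zip(words, words[1:]):
            self.followers[current][following] += 1

    def suggest(self, word: str):
        counts = self.followers.get(word.lower())
        if not counts:
            return None  # never seen this word, so nothing to suggest
        return counts.most_common(1)[0][0]


suggester = NextWordSuggester()
for _ in range(30):
    suggester.learn("good morning")   # sent every morning
suggester.learn("good evening")       # sent once

# Even at 9 pm the suggestion is 'morning': the counts are all it knows.
print(suggester.suggest("good"))
```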

(Advanced) Machine Learning ((A)ML)

This next form of AI aims to better imitate how people learn. It has only appeared more recently as it relies heavily on advanced computer hardware. Machine Learning has become a scientific field of its own, and even explaining the difference between ‘machine learning’, ‘deep learning’ and ‘neural networks’ falls far outside of the scope of this article. What we do need to know is that this is what made techniques such as OCR (optical character recognition) possible. OCR is what allows you to convert images or scans of text into editable text, and it is how Google made tens of millions of books searchable online.

Fun fact: those old-fashioned reCAPTCHAs, where you had to type in words shown to you as a distorted image, were a way for Google to train its OCR to recognise hard-to-decipher text. This way of training, in which people supply the correct answers, is a form of human-in-the-loop learning. Human feedback is also at the heart of ‘Reinforcement Learning from Human Feedback’ (RLHF), the technique used to fine-tune today’s chatbots.

Large Language Models (LLM)

The fact that a chatbot can now actually recognise and interpret human language correctly and, maybe even more important, reply in a coherent way, is an amazing leap in technology.

Although we live in times where scientific and technological breakthroughs are far from rare, the rate at which people all over the globe have embraced ChatGPT has never been seen before.

Instagram took 30 months to reach 100 million users, TikTok took only 9, but ChatGPT? Only TWO months. For the first time, a form of AI was used by millions of people, so it comes as no surprise that everybody now uses a very vague, generic term for what is in fact a quite specific application.

A large language model can be seen as a combination of the types we discussed before. In a way it is nothing more than T9 on steroids, trained not on the few text messages you send but on a data set of hundreds of billions, possibly a trillion, words.

To put this into context: all seven Harry Potter books together are about 1 million words. Assuming each book is 4 cm thick, a full set takes up 28 cm, and you’d need a bookshelf roughly 280 kilometres long for a trillion words!
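The length of that bookshelf depends on whether the 4 cm applies to each of the seven volumes or to the series as a whole. A quick back-of-the-envelope sketch of both readings, using the round figures from the paragraph above (the constant and function names are just for illustration):

```python
WORDS_IN_TRAINING_SET = 1_000_000_000_000  # a trillion words
WORDS_PER_SERIES = 1_000_000               # all seven books together
BOOKS_PER_SERIES = 7
BOOK_THICKNESS_CM = 4
CM_PER_KM = 100_000


def shelf_length_km(thickness_per_series_cm):
    """Shelf length needed if one full series takes up the given width."""
    series_needed = WORDS_IN_TRAINING_SET / WORDS_PER_SERIES
    return series_needed * thickness_per_series_cm / CM_PER_KM


# Reading 1: each of the seven volumes is 4 cm thick (28 cm per series).
per_volume = shelf_length_km(BOOKS_PER_SERIES * BOOK_THICKNESS_CM)

# Reading 2: the whole series is squeezed into a single 4 cm volume.
whole_series = shelf_length_km(BOOK_THICKNESS_CM)

print(per_volume, whole_series)  # 280.0 40.0 (kilometres)
```

Either way, a million copies of the entire series are needed to reach a trillion words.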

However, regardless of these impressive numbers, would we consider an LLM intelligent? Not really. As soon as ChatGPT came out, so did the stories about how confidently wrong it could be. Not only can it make up information, it also lacks the ability to ‘understand’ or reason logically.

Many examples of failures can be found. Some are funny, some potentially deadly, like that one time when it suggested mixing ammonia and bleach for a refreshing drink (mixing these two creates a toxic gas).

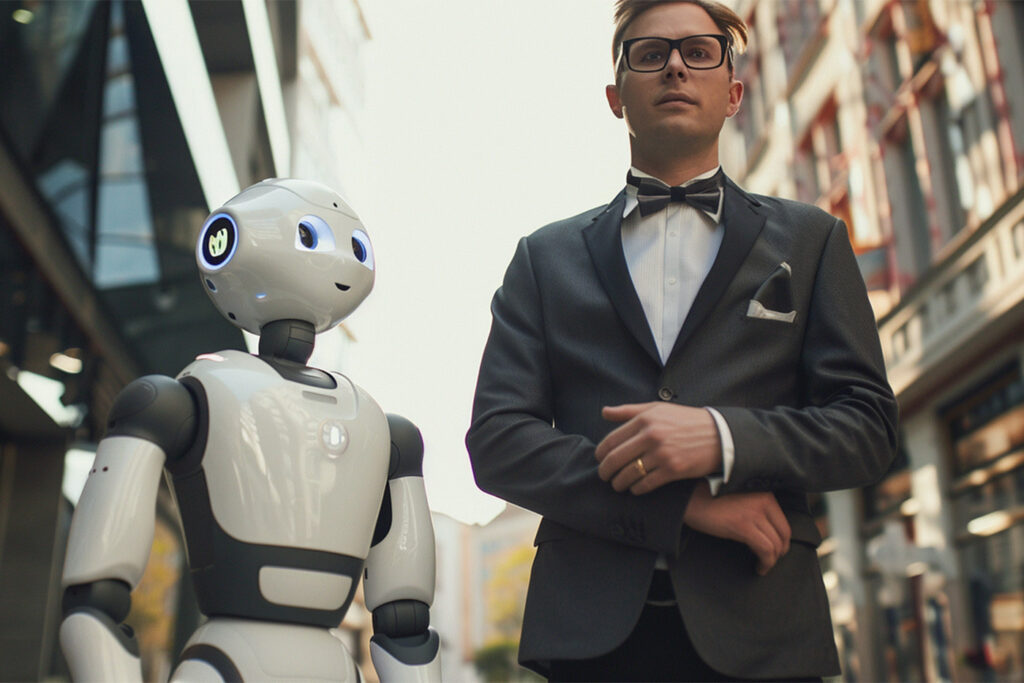

Hybrid systems

Although all of the different types of AI each have their flaws, we must not underestimate how useful they can be. The sheer amount of data they can process is almost hard to imagine and when properly managed, the accuracy and quality can far surpass that of humans.

AI systems have been used in the medical field to analyse different types of images, for example to help recognise cancers. Unlike doctors, who maybe see a few thousand such images in their careers, an AI system can analyse millions in practically no time. Training methods in which feedback is provided to the system have led us to the point that AI is on par with, or even surpassing, real human professionals. For now it cannot replace a real doctor’s judgement, but the value of such a ‘tool’ is hard to overestimate.

Even in the iGaming industry there are many possible applications. It could be an effective tool to help analyse and propose strategies for player retention, or even assist with setting the odds, another notoriously data-intensive process. These situations are very similar to, for example, (stock) trading, where all kinds of information are relevant, from news sentiment to chatter on social media.

The dark side of algorithms

Although we have only mentioned algorithms a few times throughout this article, they are at the core of any AI system. They are the step-by-step instructions by which an AI learns, reasons and makes decisions.

The biggest risks with algorithms lie in the way they are designed and the way they are trained. If biased information or reasoning is used, even unintentionally, this can lead to disastrous outcomes.

A self-learning algorithm used by the Dutch tax authorities is at the core of a scandal in which tens of thousands of people were heavily penalised over false suspicions of fraud. For over six years, people were wrongly labelled as fraudsters, leading to fines that could surpass €100,000 in some cases. Not only that, it was also responsible for children being taken away from families and placed into foster care.

This shows that human intelligence is still crucial in many applications of AI. We cannot blindly rely on systems designed by humans that make mistakes. Ironic as it may sound, we rely on humans to learn from those exact mistakes and help to teach AI – for it in itself has many things to learn.